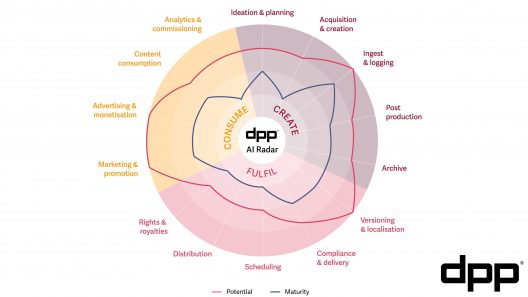

In December 2018, I had the pleasure of co-hosting a workshop called AI for Real, in which the DPP gathered 25 experts in London to survey the maturity of AI in the media industry. Then in 2021, as part of our Cloud for Media series, I wrote Automating Media, which again looked at real-world use cases for AI-driven automation in the media supply chain.

Here’s how I summarised the progress:

In 2018, the scale of hype around AI was matched only by the extent of the challenges. Three years later, progress has been significant, if still somewhat short of a complete revolution.

The findings at that time were later summarised as my Five Golden Rules of Media AI. Now we’re about to take the next step on the DPP’s AI journey with a new insight project, AI in Media: What does good look like? It feels like an excellent time to review those golden rules and ask: will they hold up in the face of the latest developments in AI?

1. Necessity is the mother of innovation

By 2021, hype had begun to subside, and companies focused on solving specific business problems with targeted solutions. The factory processes of media receipt, processing, and distribution became the logical epicentre of automation.

The push to launch huge libraries of content on international streaming platforms meant that quality control, versioning, and language services truly needed automation to meet business needs at scale.

Well, for all the hype earlier in 2023 about whether generative AI would ‘change creativity forever,’ the conversation already seems to have settled back into how large language models can assist with basic but essential business needs, such as software development or more user-friendly operational tools.

2. Obey the laws of (data) gravity

In 2010, David McCrory defined the concept of data gravity:

Consider Data as if it were a Planet or other object with sufficient mass. As Data accumulates (builds mass) there is a greater likelihood that additional Services and Applications will be attracted to this data. This is the same effect Gravity has on objects around a planet.

Data gravity has truly been at play in the development of AI. Only once the internet and the cloud enabled huge collections of media to be brought together did it become meaningfully useful to create and train machine learning models on these large bodies of data.

From today’s vantage point, the cloud was undeniably an important factor in the development of large AI models. But is it still as central now? Perhaps the next revolution will be the deployment of smaller models on our everyday computing devices, such as our smartphones.

3. People are a robot’s best friend

The sweet spot for AI - at least in 2021 - was as a counterpart to humans. Few were running AI processes unobserved.

The interesting point of differentiation was between those using AI as the virtual supervisor (reviewing and quality checking human output) and those using AI as the virtual intern (creating work that humans check and improve).

2023 has seen an explosion of assistants, bots, and co-pilots. So is the talk of replacing humans now over once and for all? Perhaps not: there will undoubtedly be tasks and roles that, in time, are fully automated by AI. But the basic principle holds up strongly in most cases, and I suspect it will for many years to come.

4. Share risk and reward

The value of ML models comes from both the technology and the training data. In 2018 there was a great deal of disagreement and uncertainty about the value exchange between media and technology companies.

That uncertainty feels fresh again today, as this balance has been brought into sharp focus by the rise of Large Language Models (LLMs). But even back in 2021, we were seeing good precedents for commercial models which respect both parties, as media and technology companies began to partner using agreements with shared commercial incentives.

However, the past year has seen a new level of concern about both the provenance of AI-generated media and its copyright implications. While our 2021 analysis holds up well for smaller customised models, the advent of large models trained on vast corpora of internet-scraped data has changed the conversation. So it will be interesting to gauge media companies’ attitudes towards AI monetisation going forward.

5. Seek revenue, not savings

Perhaps more than any other, this rule tells the story of the media industry in 2021. As global players sought subscribers above all else in the fight for streaming dominance, the use of AI was firmly focused on creating new revenue opportunities.

It goes without saying that companies want to reduce costs, and automation can help. But in almost every use case, a more important factor emerges: automation speeds up processes, reducing time to market and therefore time to revenue.

So has this changed in the more cost-conscious context of 2023? The dynamics and cost models of AI don’t look dramatically different. But the desire for cost savings is far stronger. Perhaps speed and revenue generation are still important, but it seems certain that demonstrable return on investment will be front of mind in this year’s conversation.

In fact, we’ve already kicked off the conversation on the DPP podcast.

Join us for the next stage

In two short years, the landscape of AI in media has changed fundamentally. Another wave of hype has risen and, perhaps, subsided. A new generation of AI tools is now widely available. And every media company I speak to is investing in, or at least closely watching, the development of specialist AI.

So now is the time for the DPP to take stock again and ask, AI in Media: What does good look like?

This new report, coming in early 2024, will be based on workshops and conversations taking place in November and December. If you work for one of the 500+ DPP member companies, and you’d like to take part, please reach out.

Get involved

To find out more or to get involved with this work, please contact:

If your company is not a DPP member, you can learn more about the benefits of membership, or contact Michelle to discuss joining.